Neural implicit shape representations are an emerging paradigm that offers many potential benefits over conventional discrete representations, including memory efficiency at a high spatial resolution. Generalizing across shapes with such neural implicit representations amounts to learning priors over the respective function space and enables geometry reconstruction from partial or noisy observations. Existing generalization methods rely on conditioning a neural network on a low-dimensional latent code that is either regressed by an encoder or jointly optimized in the auto-decoder framework. Here, we formalize learning of a shape space as a meta-learning problem and leverage gradient-based meta-learning algorithms to solve this task. We demonstrate that this approach performs on par with auto-decoder-based approaches while being an order of magnitude faster at test-time inference. We further demonstrate that the proposed gradient-based method outperforms encoder-decoder-based methods that leverage pooling-based set encoders.
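To make the meta-learning framing concrete, here is a minimal sketch of the general idea: learn a shared initialization from which a few gradient steps specialize the model to one shape. It is not the authors' implementation. It assumes a toy 2D circle with three parameters in place of a neural network, hand-derived gradients, and a first-order, Reptile-style outer update swapped in for whichever gradient-based meta-learning algorithm the method actually uses. All function names and hyperparameters are hypothetical.

```python
import math
import random

# Toy stand-in for a neural SDF: a 2D circle with theta = (cx, cy, r).
# Every name and constant below is an illustrative assumption.

def sdf(theta, p):
    """Signed distance from point p to the circle described by theta."""
    cx, cy, r = theta
    return math.hypot(p[0] - cx, p[1] - cy) - r

def loss_grad(theta, samples):
    """Mean squared SDF error over (point, distance) pairs, and its gradient."""
    cx, cy, r = theta
    loss, g = 0.0, [0.0, 0.0, 0.0]
    for p, d_true in samples:
        dist = math.hypot(p[0] - cx, p[1] - cy) + 1e-12  # avoid division by zero
        err = (dist - r) - d_true
        loss += err * err
        g[0] += 2.0 * err * (-(p[0] - cx) / dist)
        g[1] += 2.0 * err * (-(p[1] - cy) / dist)
        g[2] += 2.0 * err * (-1.0)
    n = len(samples)
    return loss / n, [gi / n for gi in g]

def adapt(theta0, samples, steps=5, lr=0.3):
    """Inner loop: a few gradient steps starting from the shared initialization."""
    theta = list(theta0)
    for _ in range(steps):
        _, g = loss_grad(theta, samples)
        theta = [t - lr * gi for t, gi in zip(theta, g)]
    return theta

def sample_shape(rng, n=64):
    """Draw a random circle and n dense SDF samples of it."""
    true = (rng.uniform(-0.5, 0.5), rng.uniform(-0.5, 0.5), rng.uniform(0.3, 0.6))
    pts = [(rng.uniform(-1.0, 1.0), rng.uniform(-1.0, 1.0)) for _ in range(n)]
    return [(p, sdf(true, p)) for p in pts]

def meta_train(iterations=500, meta_lr=0.1, seed=0):
    """Outer loop (first-order): nudge the initialization toward each adapted solution."""
    rng = random.Random(seed)
    theta0 = [0.9, -0.9, 0.05]  # deliberately poor starting point
    for _ in range(iterations):
        adapted = adapt(theta0, sample_shape(rng))
        theta0 = [t0 + meta_lr * (ta - t0) for t0, ta in zip(theta0, adapted)]
    return theta0

# Usage: test-time inference on an unseen shape is just the inner loop.
theta0 = meta_train()
new_shape = sample_shape(random.Random(1))
print("loss before adaptation:", loss_grad(theta0, new_shape)[0])
print("loss after adaptation: ", loss_grad(adapt(theta0, new_shape), new_shape)[0])
```

In this sketch, inference on a new shape costs a fixed, small number of gradient steps, whereas an auto-decoder would optimize a latent code from scratch for each shape.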

Reconstructing SDFs from Dense Samples

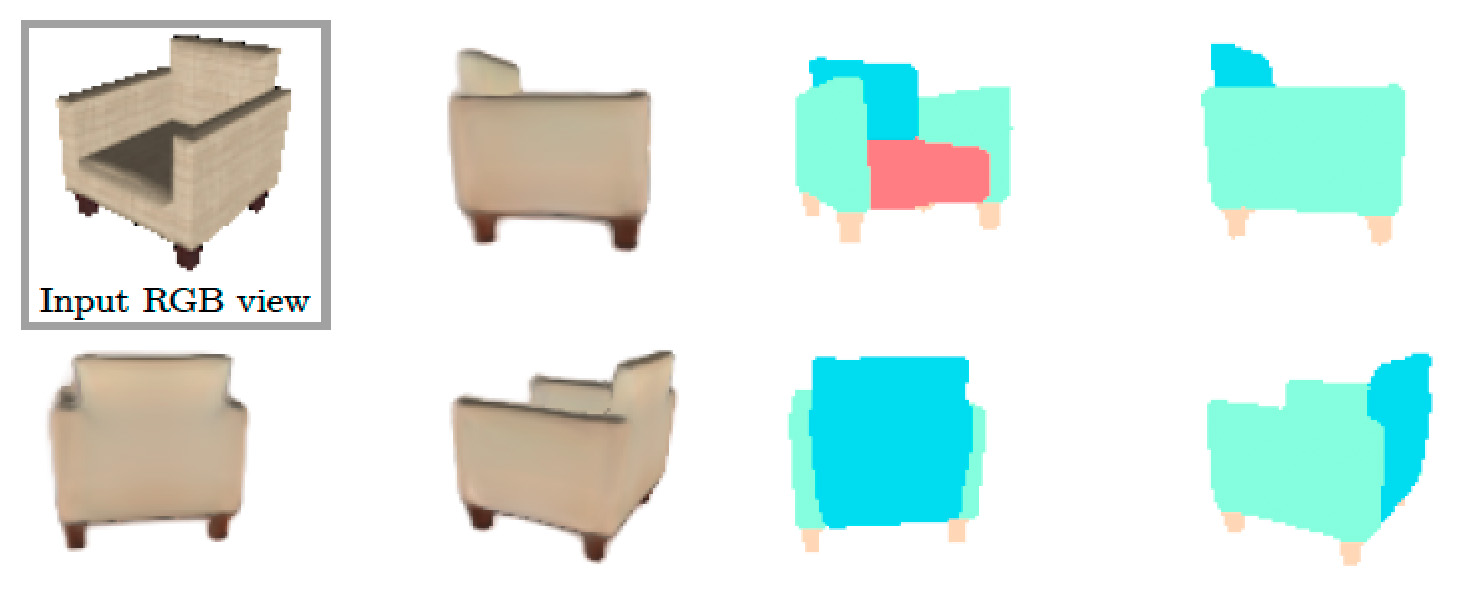

We can recover an SDF by supervising with dense, ground-truth samples from the signed distance function, as proposed in DeepSDF, or with a point cloud taken from the zero-level set (mesh surface), similar to a PointNet encoder.

Panels: Ground Truth, DeepSDF, MetaSDF
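The two kinds of supervision described above differ only in where the samples lie and what target values they carry. The sketch below illustrates that difference under stated assumptions: a 2D circle stands in for a real shape, and the helper names (`circle_sdf`, `dense_samples`, `surface_point_cloud`) are hypothetical and not taken from any released code.

```python
import math
import random

def circle_sdf(p, center=(0.0, 0.0), radius=0.5):
    """Signed distance to a circle: negative inside, positive outside."""
    return math.hypot(p[0] - center[0], p[1] - center[1]) - radius

def dense_samples(n, rng, extent=1.0):
    """Dense supervision: points from the whole domain, paired with true distances."""
    pts = [(rng.uniform(-extent, extent), rng.uniform(-extent, extent)) for _ in range(n)]
    return [(p, circle_sdf(p)) for p in pts]

def surface_point_cloud(n, rng, center=(0.0, 0.0), radius=0.5):
    """Surface supervision: points on the zero-level set, so every target is 0."""
    cloud = []
    for _ in range(n):
        angle = rng.uniform(0.0, 2.0 * math.pi)
        p = (center[0] + radius * math.cos(angle), center[1] + radius * math.sin(angle))
        cloud.append((p, 0.0))
    return cloud

rng = random.Random(0)
dense = dense_samples(256, rng)        # distances of both signs
cloud = surface_point_cloud(256, rng)  # zero distances only
```

A surface point cloud carries no direct observation of the sign or magnitude of the distance away from the surface, so a model fitted to it has to rely on its learned prior for those values.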

Reconstructing SDFs from a Surface Point Cloud

We can also recover an SDF by supervising only with a point cloud taken from the zero-level set (the mesh surface), the same kind of input that a PointNet encoder consumes.

Panels: Ground Truth, PointNet, MetaSDF Lvl.

Related Projects

Check out our related projects on the topic of implicit neural representations!