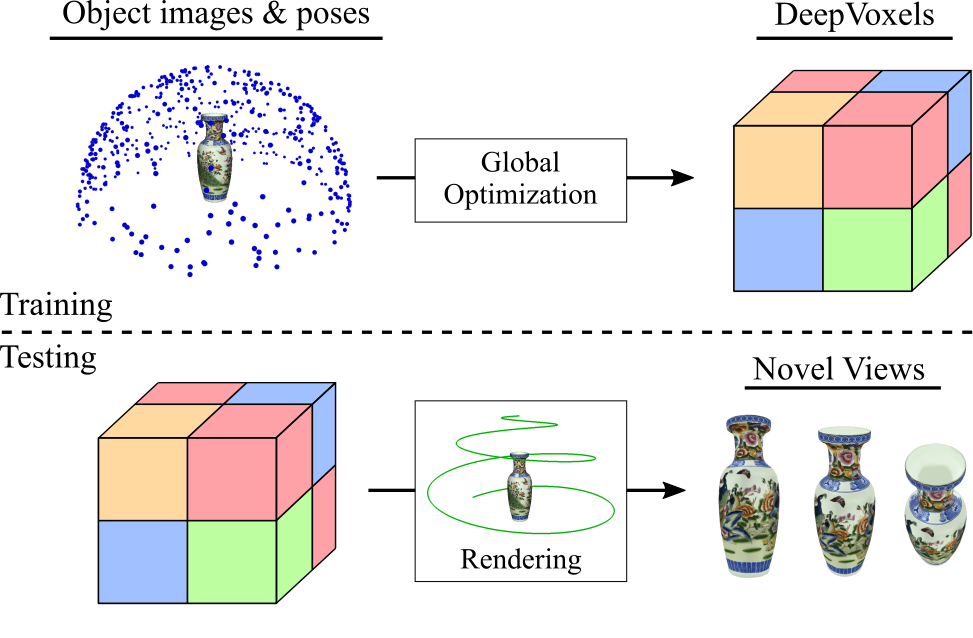

I am an Assistant Professor at MIT EECS, where I lead the Scene Representation Group. Previously, I completed my Ph.D. at Stanford University and was a postdoc at MIT CSAIL. My research interest lies in neural scene representations: the way neural networks learn to represent information about our world. My goal is to allow independent agents to reason about our world given visual observations, such as inferring a complete model of a scene, including its geometry, materials, and lighting, from only a few observations. This task is simple for humans but currently impossible for AI.